What is the AI TRiSM framework?

AI TRiSM stands for Artificial Intelligence Trust, Risk, and Security Management. The framework helps organizations make their AI systems transparent, ethical, secure, and compliant. It is designed to reduce risks such as bias, data breaches, and unexplainable AI outputs.

Why is the AI TRiSM framework important?

The AI TRiSM framework is essential for organizations using AI because it:

- Helps prevent biased or discriminatory decisions

- Supports compliance with evolving regulations such as the EU AI Act, and alignment with frameworks such as the NIST AI Risk Management Framework (AI RMF)

- Protects personal data

- Builds stakeholder and customer trust

Who should be responsible for implementing the AI TRiSM framework?

Key stakeholders include:

- Chief Data Officers (CDOs) for ethical data use

- Chief Privacy Officers (CPOs) for privacy compliance

- Chief Information Security Officers (CISOs) for AI security

- Heads of AI/ML for model lifecycle governance

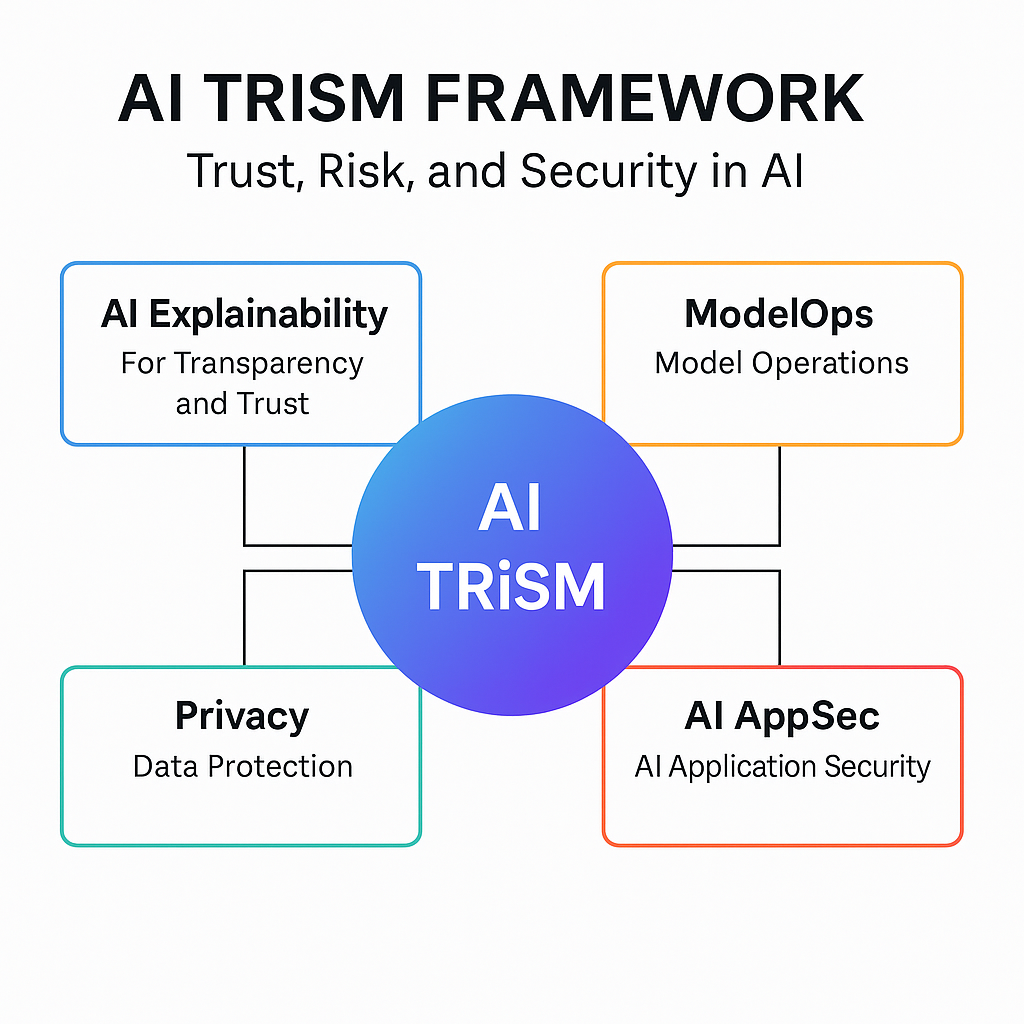

What are the components of the framework?

The framework is built around four pillars:

- AI Explainability: Makes AI decisions transparent and interpretable

- ModelOps: Manages models across their lifecycle and continuously monitors performance, drift, and bias

- AI Application Security (AI AppSec): Protects models from adversarial threats

- Privacy: Applies techniques like data anonymization and federated learning to secure sensitive data
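To make the explainability pillar more concrete, here is a minimal sketch of permutation feature importance. This is one common model-agnostic explanation technique, not a method the framework itself prescribes. It shuffles one input feature at a time and measures how much the model's accuracy drops. The `toy_model` rule, its 500 threshold, and the synthetic transaction data are hypothetical and exist only for illustration.

```python
import random
from typing import Callable, List, Sequence

Row = List[float]
Model = Callable[[Row], int]


def accuracy(model: Model, X: Sequence[Row], y: Sequence[int]) -> float:
    """Fraction of rows where the model's prediction matches the label."""
    correct = sum(1 for row, label in zip(X, y) if model(row) == label)
    return correct / len(y)


def permutation_importance(model: Model, X: Sequence[Row], y: Sequence[int],
                           n_repeats: int = 10, seed: int = 0) -> List[float]:
    """Mean accuracy drop when each feature column is shuffled independently.

    A larger drop suggests the model relies more heavily on that feature.
    """
    rng = random.Random(seed)
    baseline = accuracy(model, X, y)
    importances = []
    for j in range(len(X[0])):
        drops = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)
            # Copy each row, swapping in the shuffled value for feature j only.
            X_perm = [row[:j] + [v] + row[j + 1:] for row, v in zip(X, column)]
            drops.append(baseline - accuracy(model, X_perm, y))
        importances.append(sum(drops) / n_repeats)
    return importances


# Hypothetical fraud-style rule: flags a transaction when the amount
# (feature 0) exceeds 500. Feature 1 (hour of day) is ignored entirely.
def toy_model(row: Row) -> int:
    return 1 if row[0] > 500 else 0


if __name__ == "__main__":
    data_rng = random.Random(42)
    X = [[data_rng.uniform(0, 1000), data_rng.uniform(0, 24)] for _ in range(200)]
    y = [toy_model(row) for row in X]  # labels match the rule exactly
    print(permutation_importance(toy_model, X, y))
```

Because the toy model never looks at the hour-of-day feature, its importance comes out as exactly zero, while shuffling the amount feature causes a large accuracy drop. In practice you would run this kind of check on a held-out validation set for a real model.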

How does the AI TRiSM framework protect data privacy?

AI TRiSM protects data privacy through:

- Anonymization: Removes or masks personally identifiable information

- Encryption: Protects data in transit and at rest

- Federated Learning: Trains AI models locally where the data resides and shares only model updates, so sensitive data is never centralized
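Federated learning can be illustrated with Federated Averaging (FedAvg), a widely used federated learning algorithm. In this minimal sketch, each hypothetical client fits a one-dimensional linear model on its own private data and sends only its updated parameters to a server, which averages them weighted by each client's data size. The model, learning rate, epoch and round counts, and client data are assumptions chosen for illustration. Production systems typically add further protections, such as secure aggregation, on top of this basic loop.

```python
from typing import List, Tuple

# Hypothetical setup: a 1-D linear model y = w * x + b, stored as (w, b).
Params = Tuple[float, float]
# Each client's (x, y) pairs stay on that client; only Params are shared.
Dataset = List[Tuple[float, float]]


def local_update(params: Params, data: Dataset, lr: float = 0.05, epochs: int = 20) -> Params:
    """Client step: full-batch gradient descent on mean squared error, using only local data."""
    w, b = params
    n = len(data)
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


def federated_average(updates: List[Params], sizes: List[int]) -> Params:
    """Server step: average client parameters, weighted by local dataset size."""
    total = sum(sizes)
    w = sum(p[0] * n for p, n in zip(updates, sizes)) / total
    b = sum(p[1] * n for p, n in zip(updates, sizes)) / total
    return w, b


def train_federated(clients: List[Dataset], rounds: int = 100) -> Params:
    """Repeat: broadcast the global model, train locally on each client, then aggregate."""
    global_params: Params = (0.0, 0.0)
    sizes = [len(data) for data in clients]
    for _ in range(rounds):
        updates = [local_update(global_params, data) for data in clients]
        global_params = federated_average(updates, sizes)
    return global_params


if __name__ == "__main__":
    # Three hypothetical clients whose private data all follow y = 3x + 1.
    clients = [
        [(x, 3 * x + 1) for x in (0.0, 0.5, 1.0)],
        [(x, 3 * x + 1) for x in (1.5, 2.0)],
        [(x, 3 * x + 1) for x in (0.25, 0.75, 1.25, 1.75)],
    ]
    w, b = train_federated(clients)
    print(f"w = {w:.3f}, b = {b:.3f}")
```

The global model converges to roughly w = 3 and b = 1 even though the server only ever sees parameters, never any client's raw (x, y) pairs.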

Does the framework help prevent AI bias?

Yes. It relies on fairness audits such as:

- Disparate impact analysis

- Equalized odds testing

These audits help detect whether an AI system's decisions systematically disadvantage any group, so the bias can be investigated and corrected.
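Both audits reduce to simple ratios over a model's decisions. The minimal sketch below computes the disparate impact ratio (the favorable-outcome rate of one group divided by that of a reference group) and an equalized odds gap (the largest difference in true positive rate or false positive rate between two groups). The group labels "A" and "B" and the sample data are hypothetical, and the sketch assumes each group contains both positive and negative true outcomes.

```python
from typing import Hashable, Sequence, Tuple


def selection_rate(preds: Sequence[int], groups: Sequence[Hashable], group: Hashable) -> float:
    """Share of members of `group` who received the favorable outcome (pred == 1)."""
    chosen = [p for p, g in zip(preds, groups) if g == group]
    if not chosen:
        raise ValueError(f"no records for group {group!r}")
    return sum(chosen) / len(chosen)


def disparate_impact(preds, groups, unprivileged, privileged) -> float:
    """Ratio of favorable-outcome rates; 1.0 means parity between the two groups."""
    return selection_rate(preds, groups, unprivileged) / selection_rate(preds, groups, privileged)


def error_rates(y_true, preds, groups, group) -> Tuple[float, float]:
    """Return (true positive rate, false positive rate) for one group."""
    tp = fn = fp = tn = 0
    for t, p, g in zip(y_true, preds, groups):
        if g != group:
            continue
        if t == 1 and p == 1:
            tp += 1
        elif t == 1:
            fn += 1
        elif p == 1:
            fp += 1
        else:
            tn += 1
    return tp / (tp + fn), fp / (fp + tn)


def equalized_odds_gap(y_true, preds, groups, group_a, group_b) -> float:
    """Largest TPR or FPR difference between two groups; 0.0 means equalized odds hold."""
    tpr_a, fpr_a = error_rates(y_true, preds, groups, group_a)
    tpr_b, fpr_b = error_rates(y_true, preds, groups, group_b)
    return max(abs(tpr_a - tpr_b), abs(fpr_a - fpr_b))


if __name__ == "__main__":
    # Hypothetical audit data: true outcomes, model decisions, and group labels.
    y_true = [1, 1, 0, 0, 1, 1, 0, 0]
    preds = [1, 1, 1, 0, 1, 0, 0, 0]
    groups = ["A", "A", "A", "A", "B", "B", "B", "B"]
    di = disparate_impact(preds, groups, unprivileged="B", privileged="A")
    gap = equalized_odds_gap(y_true, preds, groups, "A", "B")
    print(f"disparate impact = {di:.2f}, equalized odds gap = {gap:.2f}")
    # prints "disparate impact = 0.33, equalized odds gap = 0.50"
```

On this sample data, group B is selected at one third the rate of group A. That is well below the 0.8 "four-fifths" rule of thumb from US employment-selection guidance, which is a common screening heuristic rather than a universal legal threshold.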

How is the AI TRiSM framework aligned with NIST guidelines?

The framework aligns closely with NIST’s AI Risk Management Framework by promoting:

- Explainability

- Security

- Accountability

- Governance throughout the AI lifecycle

Are there real-world examples of the AI TRiSM framework in action?

Yes. For instance:

- Mastercard uses explainable AI for transparent fraud detection

- JPMorgan Chase built a model risk governance function to ensure fairness and compliance

How can my organization implement this framework?

Start by:

- Auditing your current AI models

- Creating ethical guidelines

- Using tools to track model bias and drift
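Drift tracking can start with a single statistic. One commonly used measure is the Population Stability Index (PSI), which compares how a model's inputs or scores are distributed in a baseline period against recent data. This minimal sketch assumes fixed, hypothetical bin edges and synthetic scores. The alert thresholds in the comment are a widely cited industry rule of thumb, not a formal standard.

```python
import math
from bisect import bisect_right
from typing import List, Sequence


def bin_proportions(values: Sequence[float], edges: Sequence[float], eps: float = 1e-6) -> List[float]:
    """Share of values in each bin defined by sorted `edges`.

    Values below the first edge or above the last are clamped into the end bins.
    Empty bins get a small floor `eps` so the logarithm in PSI stays finite.
    """
    n_bins = len(edges) - 1
    counts = [0] * n_bins
    for v in values:
        i = min(max(bisect_right(edges, v) - 1, 0), n_bins - 1)
        counts[i] += 1
    total = len(values)
    return [max(c / total, eps) for c in counts]


def population_stability_index(baseline: Sequence[float], current: Sequence[float],
                               edges: Sequence[float]) -> float:
    """PSI = sum over bins of (actual - expected) * ln(actual / expected)."""
    expected = bin_proportions(baseline, edges)
    actual = bin_proportions(current, edges)
    return sum((a - e) * math.log(a / e) for e, a in zip(expected, actual))


if __name__ == "__main__":
    # Hypothetical model scores: training-time baseline vs. recent production traffic.
    edges = [0, 25, 50, 75, 100]
    baseline_scores = list(range(100))          # spread evenly across all bins
    recent_scores = list(range(50, 100)) * 2    # shifted toward high scores
    psi = population_stability_index(baseline_scores, recent_scores, edges)
    # Rule of thumb (not a standard): < 0.1 stable, 0.1-0.25 moderate, > 0.25 major shift.
    print(f"PSI = {psi:.2f}", "-> investigate drift" if psi > 0.25 else "-> stable")
```

Running the same check on a schedule, per feature and per group, is one lightweight way to flag when a model needs review or retraining.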

CybertLabs offers implementation services for AI TRiSM.

What are the consequences of not adopting AI TRiSM?

Not implementing this framework can expose organizations to compliance failures, biased outputs, and reputational harm. Without oversight, AI systems may violate privacy laws or produce unfair decisions, especially in sectors like finance, healthcare, or government.

As AI adoption grows, regulators and users expect transparency and accountability. AI TRiSM helps meet these expectations by reducing legal risk, ensuring fairness, and keeping AI aligned with business goals.

Can small organizations benefit from AI TRiSM?

Absolutely. Even small businesses use AI tools like chatbots and analytics, which can introduce risk if unmanaged. The framework offers scalable practices, such as explainability and privacy controls, that help SMBs stay compliant and build trust.

It’s an efficient way to adopt AI responsibly, avoid future issues, and compete confidently in an AI-driven landscape.

About CybertLabs and Our Approach to AI TRiSM

CybertLabs is a cybersecurity and risk management company with over 20 years of experience helping government agencies and private-sector organizations stay secure, compliant, and mission-ready. Our team has worked with agencies such as the IRS and the Department of the Treasury on advanced projects involving Zero Trust architecture, FISMA compliance, and enterprise security modernization.

We now bring that same expertise to artificial intelligence by helping organizations implement AI TRiSM. Whether you need help evaluating AI bias, setting up model monitoring, or aligning with NIST’s AI Risk Management Framework, CybertLabs delivers solutions that make your AI secure, transparent, and accountable.

Our services include:

- End-to-end AI model risk assessments

- AI governance framework design

- Privacy and data protection integration

- Real-time model audit and monitoring solutions

If you’re looking for a trusted partner to help you adopt AI responsibly and reduce risk, CybertLabs can help you build a strong, future-proof AI program from day one.

Learn more at cybertlabs.com